The impact of geography on cloud application performance

The ‘cloud’ is one of the great technology branding coups of the last half-century. It has been so successful, in fact, that a Google search for just the word ‘cloud’ does not even return a page 1 result for the big, fluffy white blobs in the sky that we all grew up loving.

Where are the real clouds, Google?

The cloud has taken on the characteristics of its namesake. Amorphous and everywhere. Dynamic and ever-changing. Nebulous and untouchable. Wait, wait … untouchable? Well, that’s not quite true, now is it? The last time I was in IAD2, I’m pretty sure I walked smack into a cage of the cloud while checking email on my phone.

The cloud is very much physical. Applications sit inside real servers, housed inside real data centers and exchanges, and interconnected with real fiber. As a result, the physical location of the ‘cloud,’ and how you engage with it, has a very real impact on the performance of your network and applications.

Take, for instance, a typical enterprise network that ingresses and egresses branch office traffic through one or two centralized data centers when accessing the cloud. If that enterprise is geographically centralized (i.e., its branch offices are located in a specific region), and the primary data center is located near the cloud service provider, the impact on performance and latency can be negligible. For example, a regional insurance provider or bank that has multiple offices supporting the Mid-Atlantic region and routes traffic through a headquarters in the DC metro area is close enough to major cloud exchanges and peering points that it will not see a significant impact on network performance.

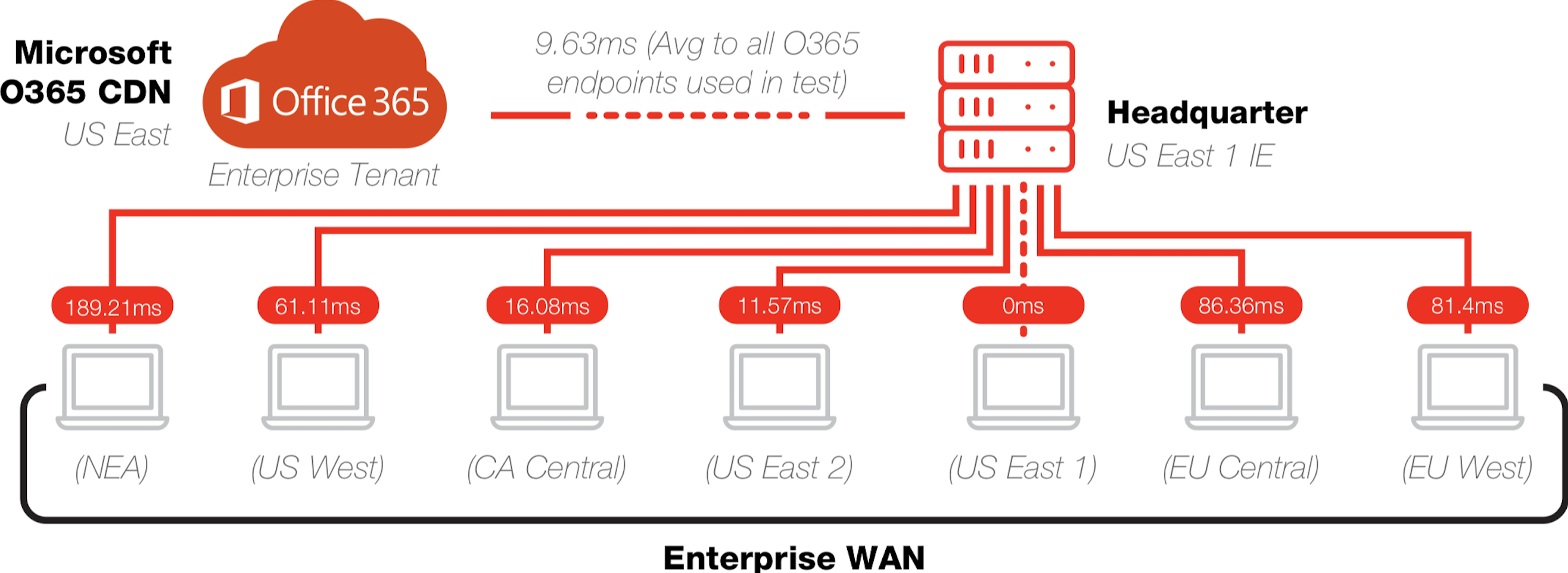

For an enterprise with locations distributed globally, though, the impact can be significant. The example below was derived from a network performance test that Apcela conducted for a global enterprise deployment of Office 365. When the enterprise follows the same model of routing traffic through one or two data centers, the impact of geography becomes clear.

For users located in Northeast Asia (NEA), routing traffic to the Mid-Atlantic headquarters is not ideal. Users experienced an average latency of 189 ms traversing the network, largely driven by the pure physics of routing traffic across the world and then back.
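To put a rough number on "the pure physics," here is a minimal back-of-the-envelope sketch in Python. It is not how Apcela measured anything; it simply estimates the best-case round trip over fiber between two points. The endpoints (Tokyo and Washington, DC) are stand-ins chosen for illustration, since the test only names "Northeast Asia" and "Mid-Atlantic," and the fiber speed of about 200,000 km/s (roughly two-thirds the speed of light) is a common rule of thumb, not a measured value.

```python
import math

EARTH_RADIUS_KM = 6371.0
# Assumption: light in optical fiber travels at roughly 2/3 of c.
FIBER_SPEED_KM_PER_S = 200_000.0


def great_circle_km(lat1, lon1, lat2, lon2):
    """Haversine distance between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def min_round_trip_ms(distance_km):
    """Best-case round trip: there and back along a perfectly straight fiber."""
    return 2 * distance_km / FIBER_SPEED_KM_PER_S * 1000


# Hypothetical endpoints for illustration only.
TOKYO = (35.68, 139.69)
WASHINGTON_DC = (38.90, -77.04)

distance_km = great_circle_km(*TOKYO, *WASHINGTON_DC)
floor_ms = min_round_trip_ms(distance_km)
print(f"Great-circle distance: {distance_km:,.0f} km")
print(f"Best-case round trip over fiber: {floor_ms:.0f} ms")
```

Under these assumptions the floor lands on the order of 100 ms before a single router, firewall, or indirect cable path is counted. Real fiber does not follow a great circle, so a measured figure well above that floor is not surprising.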

Centralized Network Architecture

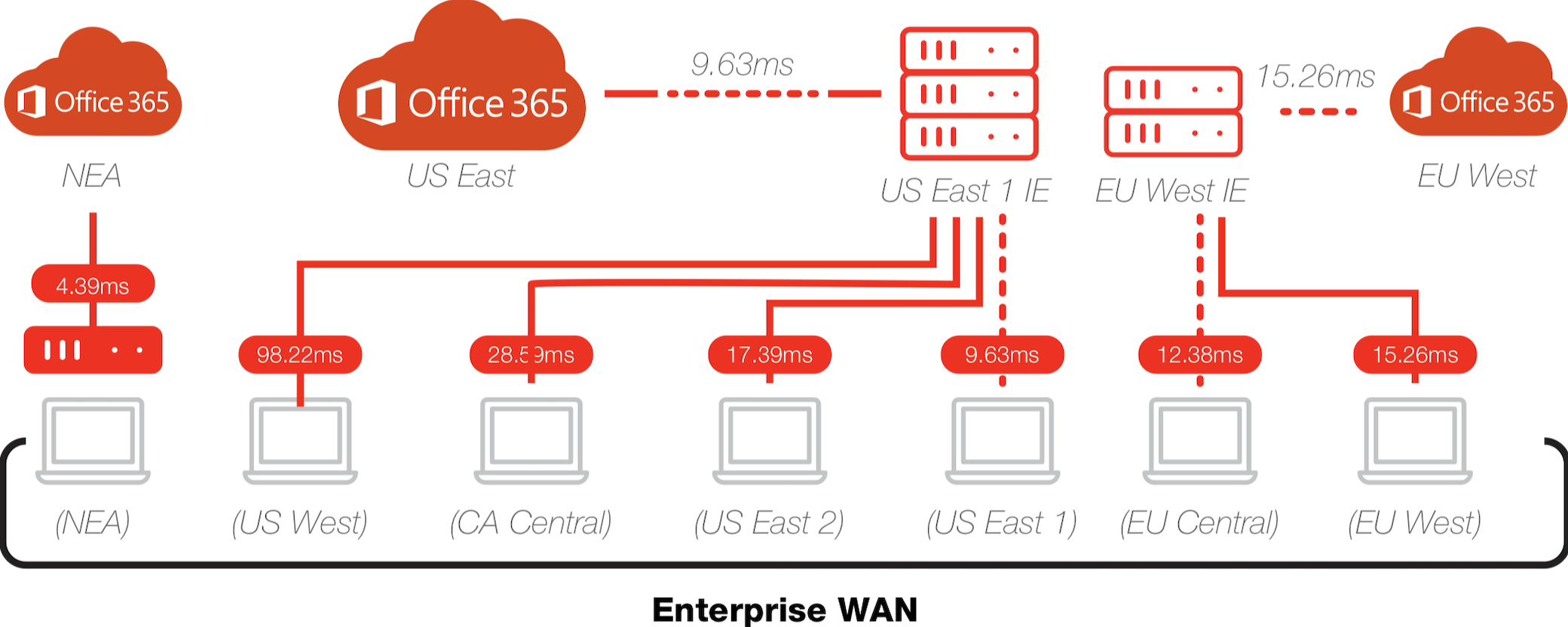

Conversely, that same user could experience a latency of only 4 ms if they were able to access a Northeast Asia Office 365 instance. The model below depicts a more modern network architecture in which the enterprise leverages a combination of Cloud Hubs (what Apcela refers to as AppHUBs) and software-defined routing to optimize performance. The result is traffic routed to an appropriate ingress/egress point and, ultimately, a more than 40x reduction in latency.

Hybrid Cloud Network Architecture
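The arithmetic behind that comparison is simple enough to sketch. The snippet below is an illustration only, not Apcela's routing logic: it takes the two latency figures from the test, picks the ingress/egress point with the lowest latency, and computes the ratio between the centralized path and the local one. The dictionary keys and the `pick_ingress` helper are hypothetical names invented for this example.

```python
def pick_ingress(latency_ms):
    """Return the name of the ingress/egress point with the lowest latency."""
    return min(latency_ms, key=latency_ms.get)


# Average latencies for the Northeast Asia user, taken from the test above.
# The keys are hypothetical labels, not real site names.
measured_ms = {
    "mid_atlantic_hq": 189.0,  # centralized architecture
    "nea_cloud_hub": 4.0,      # regional Office 365 instance
}

best = pick_ingress(measured_ms)
ratio = measured_ms["mid_atlantic_hq"] / measured_ms[best]
print(f"Best ingress/egress point: {best}")
print(f"Latency ratio: {ratio:.2f}x")  # 189 / 4 = 47.25
```

A real software-defined network would weigh more than a single average (loss, jitter, cost, policy), but the core idea is the same: send the user to the closest sensible door into the cloud.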

While the example here illustrates Office 365, the same geography and math apply to any cloud service. I don’t want to speak for the employee in Northeast Asia, but if I were him or her, I think I would give the CIO responsible for improving the performance of my cloud applications by 40x a very real (but work-appropriate) hug.

We love talking about networks. Give us a call or send a note.